In case you spent your weekend watching closing ceremonies and not reading tech news, there was a lot of buzz around a security problem in Apple products. On Friday, Apple released an emergency update for iOS 7 that fixed a severe vulnerability in its SSL/TLS implementation on the iPhone.

For those who are not technically inclined, SSL (Secure Sockets Layer) and TLS (Transport Layer Security) are the encryption protocols underlying, among many things, the little lock icon you see in the upper right corner of your browser. This encryption protects you from eavesdroppers when logging into any secure site, like your bank account. It also protects you from actors like the NSA (and other governments) scooping up your emails in bulk when you’re … well … anywhere. After Apple released the emergency update for iPhone, security firm CrowdStrike examined the patch and reverse engineered the vulnerability it was addressing, only to find out that it repaired some pretty significant parts of the iPhone operating system. They also found that the same vulnerability exists in Apple’s OS X operating system, meaning that the problem extends to Mac OS X laptops and desktops, not just iPhones.

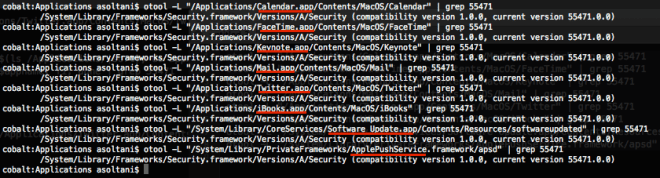

Adam Langley subsequently published a very helpful write-up of the problem, explaining that the statement “goto fail;” appears twice in a row, which has the effect of skipping over one of the key security checks in Apple’s underlying SecureTransport (SSL/TLS) library. The exact ‘diff’ between the working and vulnerable versions of the code is seen here. The severity of the problem doesn’t immediately come across in Adam’s blog post, but it’s pretty huge. Effectively, this vulnerability allows a moderately sophisticated attacker to monitor your communications with even the most secure sites and services. Many of the core programs on iOS and OS X rely on this library for communications, which means ANY app that relies on it (not just Safari) was vulnerable. For example, when your Calendar or Mail.app synced to Gmail, those communications were vulnerable to eavesdroppers on the network as a result of this error.
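To make the mechanism concrete, here is a minimal sketch in C of how a duplicated `goto fail;` can skip a check. This is a simplified illustration, not Apple's actual source: the function names (`update_hash`, `check_signature`, `verify_key_exchange_buggy`, `verify_key_exchange_fixed`) are hypothetical stand-ins, and the "signature check" is just a boolean.

```c
#include <stdbool.h>

/* Hypothetical stand-ins for the real hashing and signature steps.
 * Both return 0 on success and nonzero on failure. */
static int update_hash(void) { return 0; }
static int check_signature(bool signature_valid) { return signature_valid ? 0 : -1; }

/* Buggy shape: the second "goto fail;" is not part of any if statement,
 * so it always runs. At that point err is still 0 (success), and the
 * signature check below it is never reached. */
int verify_key_exchange_buggy(bool signature_valid)
{
    int err;

    if ((err = update_hash()) != 0)
        goto fail;
    if ((err = update_hash()) != 0)
        goto fail;
        goto fail; /* duplicated line: unconditional jump */
    if ((err = check_signature(signature_valid)) != 0)
        goto fail;

fail:
    return err;
}

/* Fixed shape: the duplicate line is removed, so the check runs. */
int verify_key_exchange_fixed(bool signature_valid)
{
    int err;

    if ((err = update_hash()) != 0)
        goto fail;
    if ((err = update_hash()) != 0)
        goto fail;
    if ((err = check_signature(signature_valid)) != 0)
        goto fail;

fail:
    return err;
}
```

In this sketch, `verify_key_exchange_buggy(false)` reports success even though the signature is invalid, while `verify_key_exchange_fixed(false)` reports failure. The indentation of the duplicated line makes it look conditional, which is part of why this kind of mistake is easy to miss in review.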

The problem seems to affect Apple’s iOS version 6 and above and Apple OS X Mavericks version 10.9 and above (based on the earlier version of the code which doesn’t have the #gotofail bug).

The way this has played out doesn’t bode well for security in an age where everyone, from the kid at your Wi-Fi cafe to the NSA, wants to monitor your communications. For example, Adam also pointed out that it was a bit underhanded of Apple to publish the update and not explain what it was fixing, a sentiment echoed by many researchers who were also annoyed that Apple announced this news on a Friday. There are companies selling commercial tools designed to exploit this exact kind of weakness by infecting a software update ‘on the fly’ (i.e. as you’re downloading it), making this a particularly sensitive topic. Releasing a partially complete update for only one of your product lines (iPhones) without fixing the same problem in your other products (Mac OS X) is pretty irresponsible, and Apple deserves the negative attention it is getting from the tech community. I am not sure why the developers at Apple chose to roll the patch out this way; I can only imagine that there were either major compatibility issues on OS X, or that someone made the call to roll out the patch for iPhone hastily to at least fix the problem for their mobile users.

Sadly, these types of programming errors have become all too common. This one seems to be just another in a line of similarly suspicious vulnerabilities like the Linux Kernel Backdoor of 2003 and the Debian OpenSSL Bug, all of which raise questions about what might be happening behind the scenes and whether something more nefarious is at play. Some have even speculated that the government is involved, making this vulnerability an intentional back door. This accusation has come up in the past in similar situations and, while I don’t put a lot of stock in conspiracy theories, I agree with John Gruber at daringfireball that this is a legitimate question in this climate. Given the work they do, it is hard to believe that the NSA wasn’t at least aware of (and actively exploiting) this vulnerability (news that would have gone unreported since this was released after Snowden left the NSA, and therefore not part of the files he released). As a result, I do think this should be investigated further for any evidence of foul play. While I believe the NSA may not have added this specific back door, these are exactly the types of vulnerabilities they discover and exploit. The question is, if the NSA did come across this bug under their defensive information security mission (as Gruber speculates), would they turn it over to Apple or would they keep it to use under their offensive mission given the broad capability it gives them? Their track record says they wouldn’t.

My biggest concern is that this bug existed for about 5 months and went undetected by Apple. As Adam points out, this can be a hard bug to catch, although many experts (including Adam) have indicated that certain compiler checks could have alerted the developer. More importantly, most software developers now use unit tests to check for exactly these types of problems, particularly given the fact that we know most of the types of attacks on SSL. In fact, Apple requires 3rd party developers to address problems like this before loading apps to the store, suggesting that a basic security check should have been on their to-do list for their own platform as well. The developers at Apple should be able to hold themselves to the same standards they set for third parties.
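As a rough illustration of the kind of unit test meant here, the sketch below is a "negative test": it feeds a verifier deliberately bad input and asserts that the input is rejected. Everything in it is an assumption made for illustration. `toy_sign` is a trivial checksum standing in for a real signature (it is not cryptography), and `toy_verify` is a hypothetical verifier, not any Apple API.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Toy stand-in for a signature: a simple rolling checksum.
 * NOT real cryptography; it only gives the test something to check. */
static unsigned char toy_sign(const unsigned char *msg, size_t len)
{
    unsigned char sum = 0;
    for (size_t i = 0; i < len; i++)
        sum = (unsigned char)(sum * 31u + msg[i]);
    return sum;
}

/* Hypothetical verifier under test: 0 on success, -1 on failure. */
int toy_verify(const unsigned char *msg, size_t len, unsigned char sig)
{
    return toy_sign(msg, len) == sig ? 0 : -1;
}

/* Negative test: valid input must pass, forged or tampered input must fail. */
void test_verifier_rejects_bad_input(void)
{
    const unsigned char msg[] = "server key exchange parameters";
    size_t len = sizeof msg - 1; /* exclude the trailing NUL */
    unsigned char good = toy_sign(msg, len);

    /* A correct signature is accepted. */
    assert(toy_verify(msg, len, good) == 0);

    /* A forged signature (one bit flipped) is rejected. */
    assert(toy_verify(msg, len, (unsigned char)(good ^ 1)) != 0);

    /* A tampered message with the original signature is rejected. */
    unsigned char tampered[sizeof msg];
    memcpy(tampered, msg, sizeof msg);
    tampered[0] ^= 0x01;
    assert(toy_verify(tampered, len, good) != 0);
}
```

A verifier that returns success without ever reaching its check would fail the second and third assertions. As for the compiler checks mentioned above, one example is Clang's `-Wunreachable-code` warning, which flags code that can never execute; it is not part of `-Wall`, so it has to be enabled explicitly.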

As of the writing of this post, there is still not an official fix for this issue in OS X. Some researchers have posted an unofficial patch that sophisticated users can apply (WARNING: use at your own risk) and Apple has said they will have an official one “very soon” (I’d personally recommend waiting for the official fix). Typically, the advice in a situation like this would be to use a Virtual Private Network, but the Mac VPN agent seems to rely on the vulnerable SSL library as well.

In the famous words of Kristin Paget, well respected security researcher and former Apple employee: Dear Apple, Fix your shit. In the meantime, concerned Mac OS X users should use the Chrome or Firefox browser for their online activities and disable background services (like Mail.app or iCloud), especially when they’re using a network they don’t trust (e.g. at an Internet cafe). And iPhone users should be sure to update their systems as soon as updates are available if they haven’t already. Until then, users can keep checking http://hasgotofailbeenfixedyet.com/ for updates.

2/25 UPDATE:

The OS X Update 10.9.2 is finally out. It fixes the #gotofail TLS/SSL bug; however, the update does not directly mention the problem (though they confirm that it is fixed in an unlinked security note buried elsewhere on their site). I’m glad the update is posted, but it still surprises me that Apple waited 3 extra days to fix CVE-2014-1266 with a version update rather than just do a timely security update.

Related stories:

Ars Technica: Extremely critical crypto flaw in iOS may also affect fully patched Macs

Forbes: Apple’s ‘Gotofail’ Security Mess Extends To Mail, Twitter, iMessage, Facetime And More

IT World: Apple encryption mistake puts many desktop applications at risk

The Verge: The dangers behind Apple’s epic security flaw